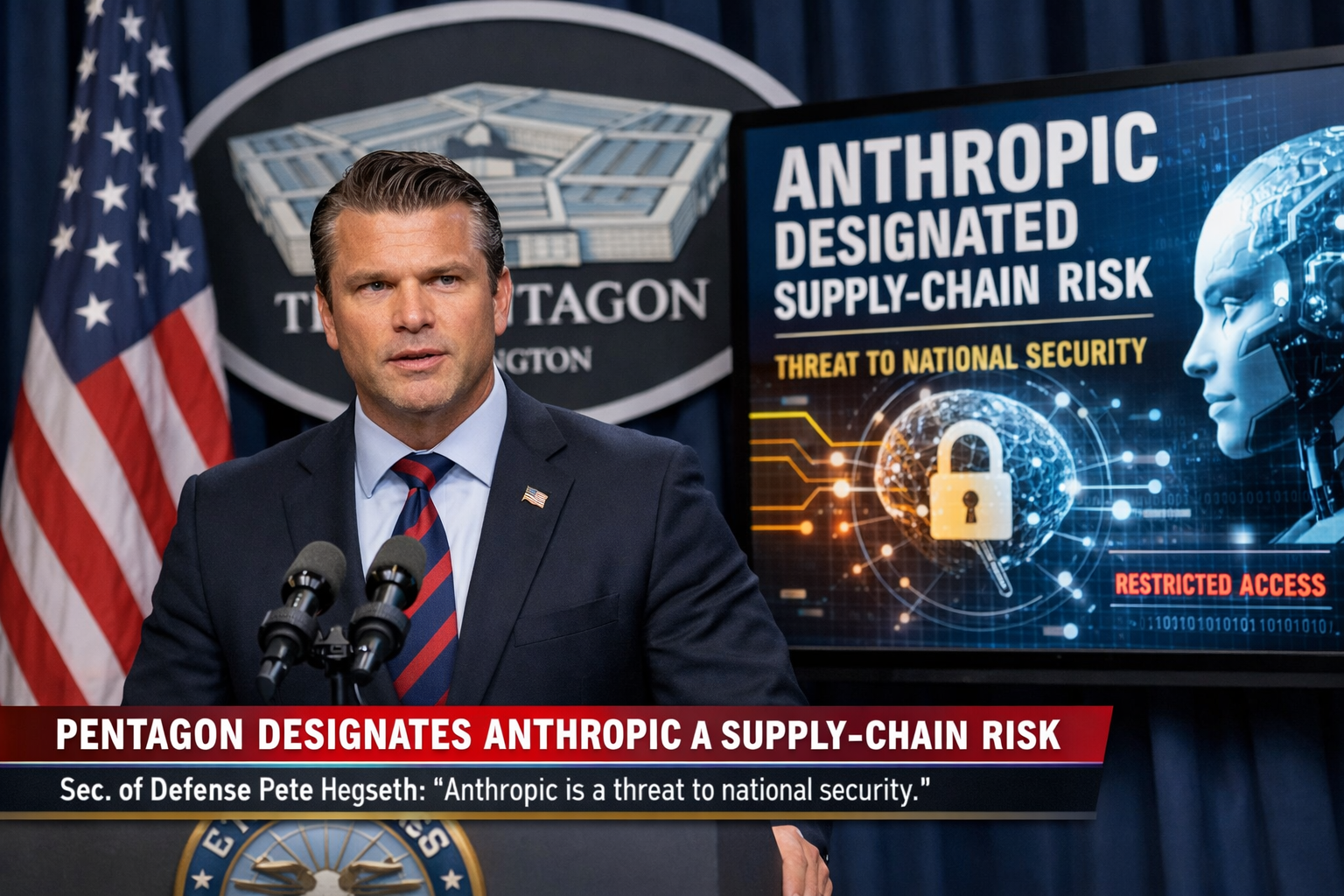

What began as a social media proclamation escalated swiftly into a significant national security designation. Secretary of Defense Pete Hegseth announced that Anthropic — the AI company behind the Claude family of models — has been formally classified as a supply-chain risk, a move that came roughly two hours after President Donald Trump declared on Truth Social that Anthropic products would be banned from federal government use.

The designation carries immediate and far-reaching implications across the defense technology sector. Major contractors that integrate Claude into their Pentagon-facing operations, including Palantir and Amazon Web Services, now face uncertainty about the scope and enforcement of the classification. Whether the designation extends to companies that use Claude for services unrelated to national security work remains an open legal and policy question.

Anthropic has signaled that it will not accept the designation without a fight. The company has indicated it is prepared to challenge the supply-chain risk classification in court, setting the stage for what could become a landmark dispute over the government's authority to restrict AI vendors on national security grounds. The outcome of any such litigation could set important precedents for the broader AI industry's relationship with federal procurement.

The rapid sequence of events, from a presidential social media post to a formal Pentagon designation roughly two hours later, underscores how quickly AI policy decisions are now being made at the highest levels of the executive branch. The intersection of artificial intelligence procurement, national security doctrine, and commercial technology partnerships has rarely been tested so directly or so publicly. Industry observers and legal experts are closely monitoring how the situation develops.